Perplexity has unveiled Perplexity Computer, a product designed to act as a general-purpose digital worker.

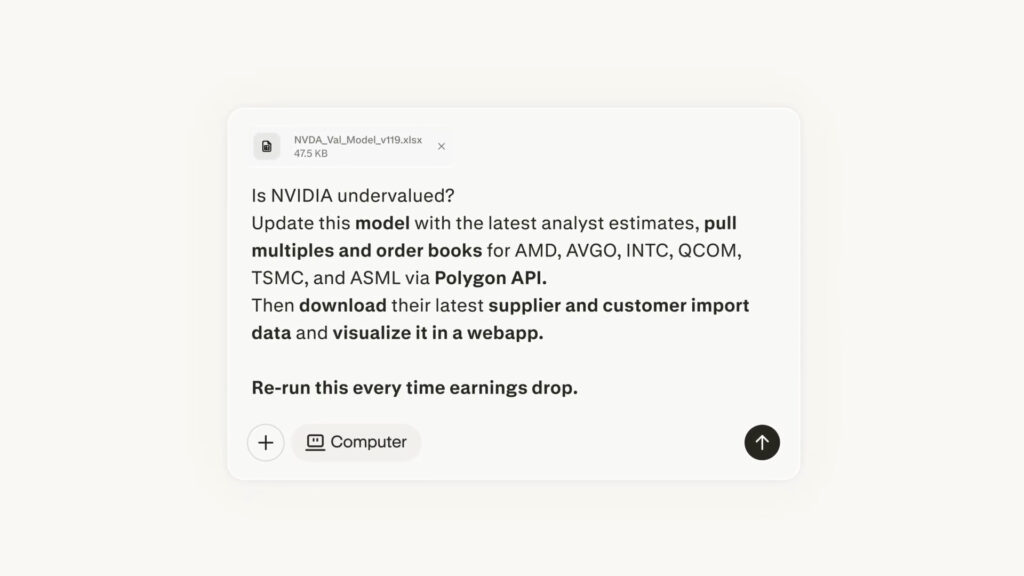

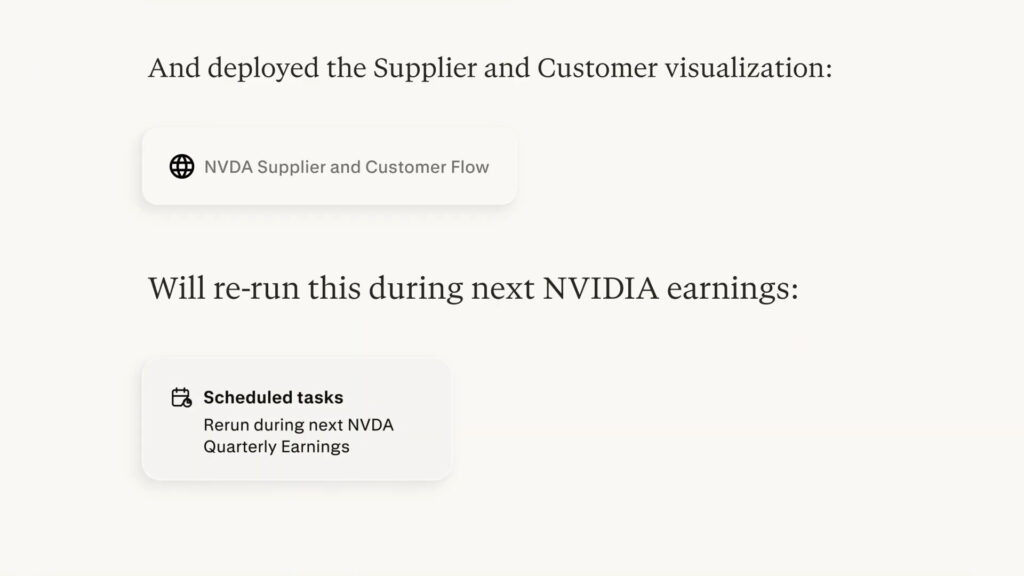

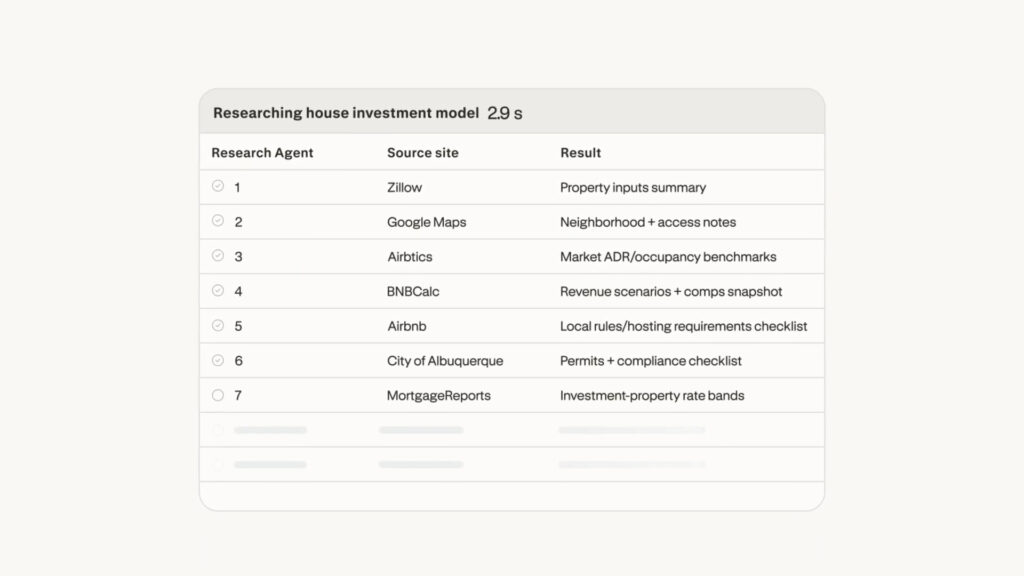

Perplexity presented Computer as a system that builds and executes entire workflows, going beyond search- and answer-centric chat. A user describes the desired outcome in natural language; the system breaks the task into sub-tasks, spawns a sub-agent for each one, and runs them in parallel. It is designed to produce finished results by combining web research, document creation, data processing, API calls to connected services, and coding.
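The fan-out pattern described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the company's implementation: `decompose`, `run_subagent`, and `run_workflow` are hypothetical names, the decomposition step is stubbed with a fixed set of work types, and a short sleep stands in for real model and tool calls.

```python
import asyncio


# Hypothetical planner: a real system would ask a model to split the goal.
def decompose(goal: str) -> list[str]:
    """Split a goal into sub-tasks (stubbed with fixed kinds of work)."""
    kinds = ["web research", "data processing", "document creation"]
    return [f"{kind}: {goal}" for kind in kinds]


# Hypothetical sub-agent: a stand-in for a worker that calls models and tools.
async def run_subagent(subtask: str) -> str:
    await asyncio.sleep(0.01)  # simulate I/O-bound work such as an API call
    return f"done({subtask})"


async def run_workflow(goal: str) -> list[str]:
    subtasks = decompose(goal)
    # Start one sub-agent per sub-task and wait for all of them concurrently.
    results = await asyncio.gather(*(run_subagent(t) for t in subtasks))
    return list(results)


if __name__ == "__main__":
    print(asyncio.run(run_workflow("market report")))
```

`asyncio.gather` returns results in the order the sub-tasks were submitted even though they run concurrently, which keeps the final assembly step deterministic.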

According to the company, Computer operates the software stack directly, the way a human colleague would. It handles reasoning, delegated search, building, memory, coding, and delivery of results as a single flow, and when it hits a bottleneck it can spawn new sub-agents to troubleshoot. The company said tasks run in an isolated compute environment that is integrated with a real file system and browser tools.

The core message of the announcement is multi-model orchestration. Perplexity argued that, contrary to the view that models are becoming commoditized, they are becoming more specialized, so a complete workflow requires combining several frontier models. According to the company, Perplexity Computer uses Claude Opus 4.6 as its core reasoning engine, Gemini for deep research, Nano Banana for images, Veo 3.1 for video, Grok for speed on lightweight tasks, and GPT-5.2 for long-context recall and broad search.

Perplexity said its model-agnostic architecture will let users change model assignments or pick a specific model for a given sub-task as models evolve. Because cost and token budgets are real constraints, the company aims to give users choice and control.
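A model-agnostic setup of this kind can be sketched as a routing table with user overrides. The sketch is hypothetical: the task-type keys, the `Router` class, and the use of plain model names as strings (rather than real API model identifiers) are assumptions for illustration; only the default assignments follow the ones listed in the announcement.

```python
from dataclasses import dataclass, field

# Hypothetical task-type keys mapped to the default models named in the
# announcement. Plain names are used; these are not real API model IDs.
DEFAULT_ROUTES: dict[str, str] = {
    "reasoning": "Claude Opus 4.6",
    "deep_research": "Gemini",
    "image": "Nano Banana",
    "video": "Veo 3.1",
    "lightweight": "Grok",
    "long_context": "GPT-5.2",
}


@dataclass
class Router:
    """Hypothetical router: default assignments plus per-task-type overrides."""

    # User choices, e.g. a cheaper model for one task type to save tokens.
    overrides: dict[str, str] = field(default_factory=dict)

    def model_for(self, task_type: str) -> str:
        # A user override takes precedence over the default assignment.
        if task_type in self.overrides:
            return self.overrides[task_type]
        if task_type in DEFAULT_ROUTES:
            return DEFAULT_ROUTES[task_type]
        raise KeyError(f"no model configured for task type {task_type!r}")


if __name__ == "__main__":
    router = Router(overrides={"deep_research": "GPT-5.2"})
    print(router.model_for("deep_research"))  # user override
    print(router.model_for("video"))  # default assignment
```

Keeping the defaults separate from the overrides means one task type can be moved to a different model without touching the rest of the table.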

The service launched first for Perplexity Max subscribers, and the company plans to make it available to Enterprise Max users soon.